Explainable Artificial Intelligence (XAI): A Reason to Believe?

Artificial Intelligence (AI) is revolutionizing industries from healthcare to finance, transforming how we live and work. Yet, despite its vast potential, AI often remains a black box whose decisions are difficult to interpret. This lack of transparency can lead to mistrust and misuse, and it raises ethical concerns. Enter Explainable Artificial Intelligence (XAI), a growing field dedicated to making AI systems more transparent and understandable. But is XAI the solution we need to trust AI fully? Let’s explore.

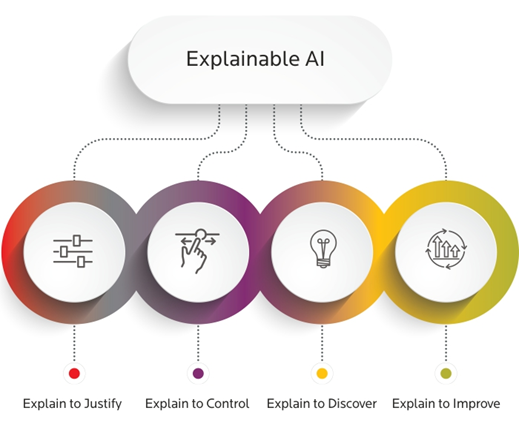

What is Explainable AI?

Explainable AI refers to methods and techniques that allow human users to comprehend, and appropriately trust, the output of machine learning algorithms. XAI aims to make AI decision-making transparent and interpretable, so that users can understand why a model made a particular decision. This is crucial in sensitive areas like healthcare, finance, and law, where AI decisions can have significant consequences.

The Need for Explainability

AI systems are increasingly used to support or even replace human decision-making. For example, in the medical field, AI algorithms can analyze medical images to detect diseases like cancer. However, if these algorithms are opaque, doctors might hesitate to trust them, especially if the AI’s decisions contradict their own expertise. Explainability helps bridge this trust gap by providing insights into how and why an AI system reaches its conclusions.

Examples of XAI in Action

1. Healthcare: Consider an AI system used to predict a patient's risk of readmission. If a hospital uses this system to decide which patients receive follow-up care, understanding the factors that drive these predictions is essential. XAI can show whether the model bases its predictions on relevant medical history, lab results, or other clinically meaningful factors, helping healthcare providers make informed decisions.

2. Finance: In the financial sector, AI models can assess credit risk or detect fraudulent transactions. For instance, if an AI denies a loan application, XAI can clarify whether the decision was due to the applicant’s credit history, income level, or other factors. This transparency not only helps applicants understand and potentially improve their financial health but also ensures regulatory compliance.

3. Legal: AI tools are increasingly used in legal settings for tasks such as reviewing documents or predicting case outcomes. If a predictive model suggests a particular case strategy, lawyers need to understand the rationale behind the suggestion. XAI can provide explanations that highlight the relevant legal precedents or evidence considered by the model.

Techniques in XAI

Several techniques are used to enhance AI explainability:

– Feature Importance: This method identifies which features (or input variables) most influence the model’s predictions. For example, in a model predicting loan defaults, feature importance analysis might reveal that income level and credit history are the most critical factors.

– Model-Agnostic Methods: Techniques like LIME (Local Interpretable Model-agnostic Explanations) and SHAP (SHapley Additive exPlanations) can explain the predictions of any machine learning model by treating it as a black box. LIME fits a simpler, interpretable model around a single prediction, while SHAP uses Shapley values from cooperative game theory to apportion a prediction among the input features. Both can highlight which features contributed most to a particular decision.

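– Visualizations: Decision-tree diagrams and partial dependence plots visually represent how input features influence predictions. A partial dependence plot, for instance, traces how the average prediction changes as a single feature varies, making it easier for users to understand the model’s behavior.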
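
To make the attribution idea behind SHAP more concrete, here is a minimal sketch that computes exact Shapley values for a single loan decision. Everything in it is an assumption made for illustration: the `loan_score` function, the feature names, the numeric values, and the baseline applicant are all hypothetical, and the code does not use the SHAP library itself. It enumerates every subset of features, which is only practical for a handful of inputs; real tools rely on approximations.

```python
from itertools import combinations
from math import factorial


def loan_score(features):
    """Hypothetical toy model: a higher score means a more creditworthy applicant."""
    score = 0.5 * features["credit_history"] + 0.3 * features["income"]
    # Interaction term: high debt hurts more when income is low.
    score -= 0.2 * features["debt"] * (1.0 - features["income"])
    return score


def shapley_values(model, instance, baseline):
    """Exact Shapley values for one prediction.

    Each feature's value is its weighted average marginal contribution over
    all subsets of the other features. Features outside a subset are set to
    their baseline value.
    """
    names = list(instance)
    n = len(names)

    def value(subset):
        mixed = {k: (instance[k] if k in subset else baseline[k]) for k in names}
        return model(mixed)

    result = {}
    for name in names:
        others = [k for k in names if k != name]
        total = 0.0
        for size in range(n):
            # Standard Shapley weight for a coalition of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                members = set(subset)
                total += weight * (value(members | {name}) - value(members))
        result[name] = total
    return result


# Hypothetical applicant and "typical" baseline, with features scaled to 0..1.
applicant = {"credit_history": 0.2, "income": 0.4, "debt": 0.9}
baseline = {"credit_history": 0.7, "income": 0.6, "debt": 0.3}

contributions = shapley_values(loan_score, applicant, baseline)

# List features from most harmful to most helpful for this applicant.
for name, phi in sorted(contributions.items(), key=lambda kv: kv[1]):
    print(f"{name:>15}: {phi:+.3f}")
```

The contributions add up to the difference between the applicant's score and the baseline score, so each number can be read as that feature's share of the gap. In this toy setup, credit history accounts for the largest share of the shortfall, which is the kind of statement a loan applicant or a regulator could act on.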

Challenges and Future Directions

While XAI holds great promise, it is not without challenges. Achieving a balance between model accuracy and interpretability can be difficult, as more complex models often yield better performance but are harder to explain. Additionally, different users may require different levels of explanation, and what is considered understandable can vary widely.

Moreover, explanations must be faithful to what the model actually does, not merely plausible; a convincing but misleading explanation can be worse than none. As XAI techniques advance, it will be essential to develop standardized methods for evaluating the quality of explanations.

Explainable AI represents a significant step towards building trust and accountability in AI systems. By shedding light on the inner workings of AI models, XAI helps ensure that these systems are used responsibly and ethically. As we continue to integrate AI into critical aspects of our lives, the need for transparency and explainability will only grow. XAI offers a compelling reason to believe in the promise of AI, making it an essential component of the future of artificial intelligence.

In conclusion, while challenges remain, the development and implementation of XAI can pave the way for more trustworthy and reliable AI systems, fostering a future where humans and machines can collaborate more effectively and confidently.

Related News

Easy Access to English Subject Related Materials for Grade 6 – 9

The Ministry of Education has created platform where students in grade 6 to 9 can easily access a wide range of English…

Read MoreStudents allowed to use SLTB November season ticket this month

Schoolchildren have been granted the facility to travel this month on Sri Lanka Transport Board buses using the November season ticket. Accordingly,…

Read MoreEducation Ministry Launches ‘Prathishta’ Initiative to Rebuild Disaster-Affected Schools

The Ministry of Education has launched a new initiative, titled "Prathishta" to rebuild schools damaged by the recent cyclone and related disasters,…

Read MoreRising School Dropouts and Eroding Trust Challenge Sri Lanka’s Education Goals

A growing number of school dropouts, disruptions to examinations, and declining parental confidence in the school system have emerged as major obstacles…

Read MoreNew Initiative Boosts Children’s Mental Well-Being in Relief Shelters

A new programme has been introduced to support the mental health and emotional wellbeing of children living in temporary relief centres in…

Read MoreCourses

-

MBA in Project Management & Artificial Intelligence – Oxford College of Business

In an era defined by rapid technological change, organizations increasingly demand leaders who not only understand traditional project management, but can also… -

Scholarships for 2025 Postgraduate Diploma in Education for SLEAS and SLTES Officers

The Ministry of Education, Higher Education and Vocational Education has announced the granting of full scholarships for the one-year weekend Postgraduate Diploma… -

Shape Your Future with a BSc in Business Management (HRM) at Horizon Campus

Human Resource Management is more than a career. It’s about growing people, building organizational culture, and leading with purpose. Every impactful journey… -

ESOFT UNI Signs MoU with Box Gill Institute, Australia

ESOFt UNI recently hosted a formal Memorandum of Understanding (MoU) signing ceremony with Box Hill Institute, Australia, signaling a significant step in… -

Ace Your University Interview in Sri Lanka: A Guide with Examples

Getting into a Sri Lankan sate or non-state university is not just about the scores. For some universities' programmes, your personality, communication… -

MCW Global Young Leaders Fellowship 2026

MCW Global (Miracle Corners of the World) runs a Young Leaders Fellowship, a year-long leadership program for young people (18–26) around the… -

Enhance Your Arabic Skills with the Intermediate Language Course at BCIS

BCIS invites learners to join its Intermediate Arabic Language Course this November and further develop both linguistic skills and cultural understanding. Designed… -

Achieve Your American Dream : NCHS Spring Intake Webinar

NCHS is paving the way for Sri Lankan students to achieve their American Dream. As Sri Lanka’s leading pathway provider to the… -

National Diploma in Teaching course : Notice

A Gazette notice has been released recently, concerning the enrollment of aspiring teachers into National Colleges of Education for the three-year pre-service… -

IMC Education Features Largest Student Recruitment for QIU’s October 2025 Intake

Quest International University (QIU), Malaysia recently hosted a pre-departure briefing and high tea at the Shangri-La Hotel in Colombo for its incoming… -

Global University Employability Ranking according to Times Higher Education

Attending college or university offers more than just career preparation, though selecting the right school and program can significantly enhance your job… -

Diploma in Occupational Safety & Health (DOSH) – CIPM

The Chartered Institute of Personnel Management (CIPM) is proud to announce the launch of its Diploma in Occupational Safety & Health (DOSH),… -

Small Grant Scheme for Australia Awards Alumni Sri Lanka

Australia Awards alumni are warmly invited to apply for a grant up to AUD 5,000 to support an innovative project that aim… -

PIM Launches Special Programme for Newly Promoted SriLankan Airlines Managers

The Postgraduate Institute of Management (PIM) has launched a dedicated Newly Promoted Manager Programme designed to strengthen the leadership and management capabilities… -

IMC – Bachelor of Psychology

IMC Education Overview IMC Campus in partnership with Lincoln University College (LUC) Malaysia offers Bachelor of Psychology Degree right here in Sri…

Newswire

-

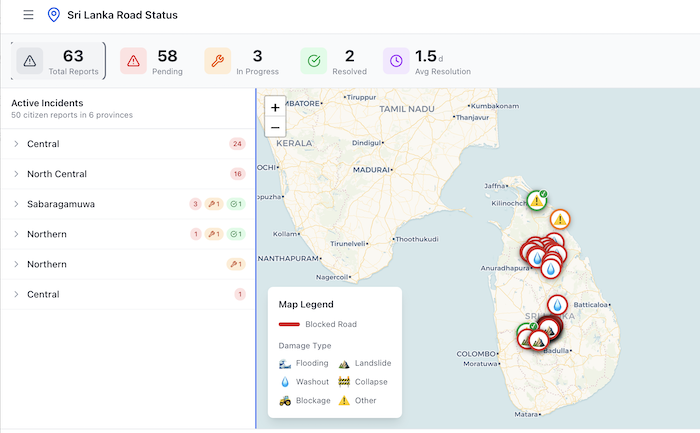

Nawalapitiya–Kandy Road reopens after 18 days

ON: December 15, 2025 -

‘Felt numb after reaching Delhi’: Man compares Sri Lanka with India

ON: December 15, 2025 -

Suspect nabbed with Rs. 200 Mn Kush haul

ON: December 15, 2025 -

Students allowed to use SLTB November season ticket this month

ON: December 15, 2025 -

A new public platform to report road conditions : Transport Minister

ON: December 15, 2025